When organizations attempt to deploy standard Large Language Models (LLMs) into their customer service or operational workflows, they face three massive barriers: data security, hallucination liabilities, and a complete lack of financial observability.

At Qubitly Ventures, we view AI as an operational engine. Building on our previous research into Multi-Agent SRE Blueprints and Prompt Injection Mitigation, we have developed a proprietary framework for deploying autonomous service agents that actually work in highly regulated environments.

We call this the S.O.A.R. Framework (Secure Operations & Autonomous Routing).

To demonstrate how this framework resolves the enterprise customer service crisis, let's examine a recent implementation by Qubitly: a high-stakes, multi-region operational engine for the luxury real estate market across the UAE, UK, and Singapore.

The Problem: When "Good Enough" is a Legal Liability

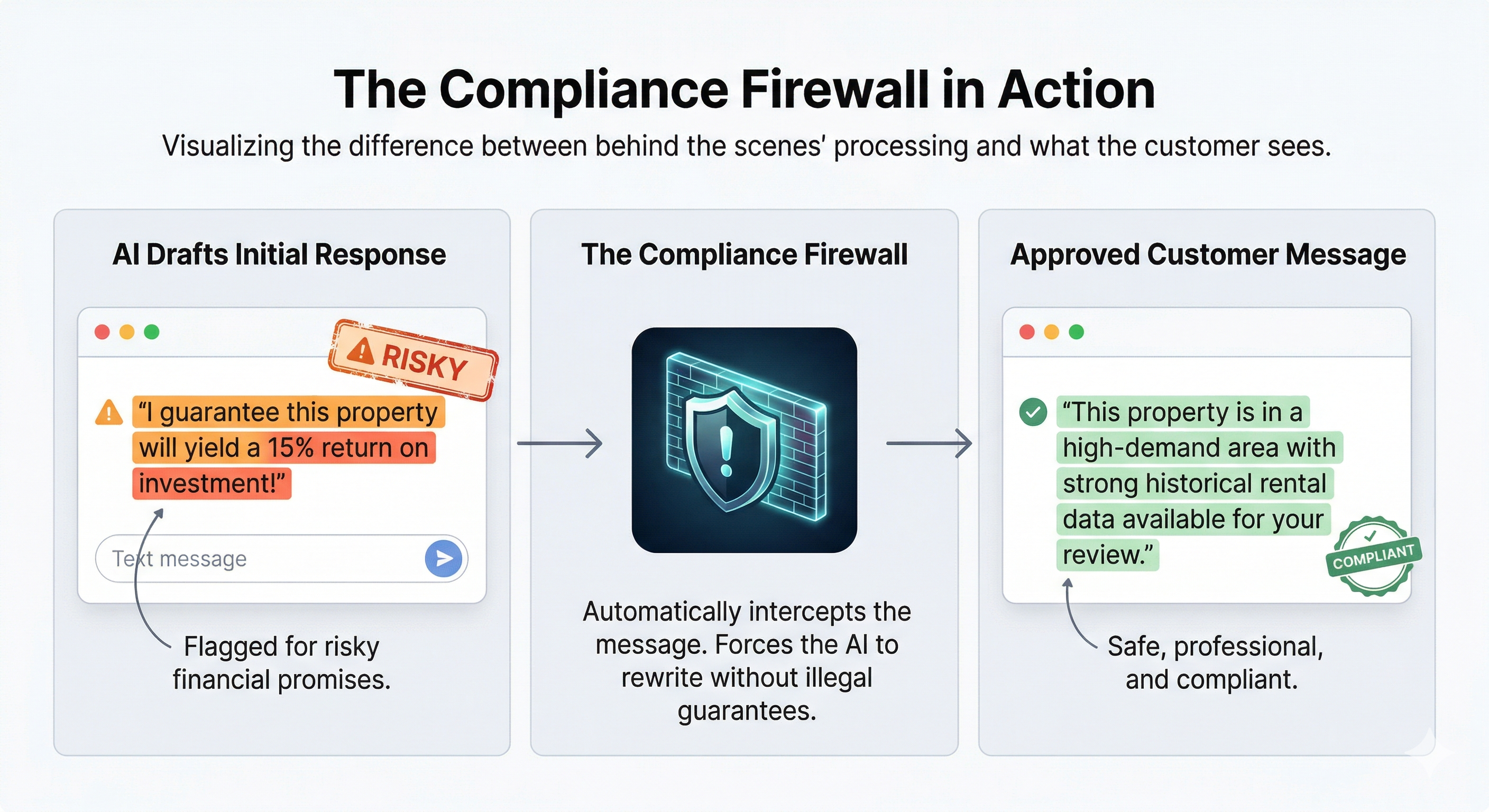

Customer service in high-value sectors (real estate, banking, healthcare) cannot afford AI hallucinations. If an AI agent illegally guarantees a 15% ROI on a Dubai property, or exposes a VIP client's Personally Identifiable Information (PII) to a third-party API, the resulting regulatory fines (like RERA violations in the UAE) far outweigh the cost savings of automation.

Enterprises need systems that can autonomously route requests, query internal databases, and logically peer-review their own work before speaking to a customer.

The S.O.A.R. Framework in Action: The Tri-Market Real Estate Engine

We architected a multi-agent system designed specifically to handle complex operational routing, heavily guarded by dedicated compliance agents.

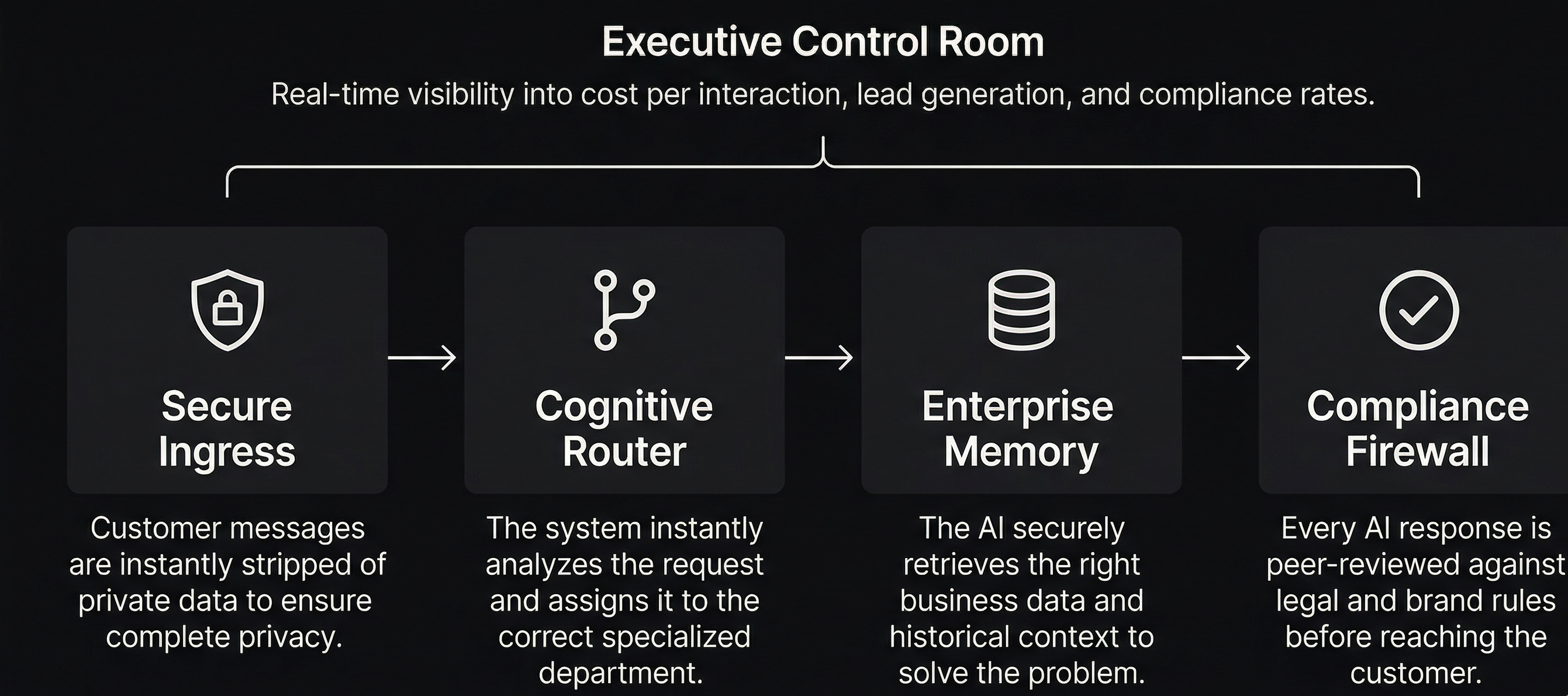

Here is how the S.O.A.R. framework maps to this real-world implementation:

1. Zero-Trust AI Architecture & Contextual Memory Retrieval

Enterprise data governance requires strict privacy-preserving AI. Instead of exposing raw user data to the model, the system anonymizes all interactions at the edge. To ensure the AI feels personal without compromising PII, we utilize stateful, vectorized memory. This allows the system to securely retain long-term semantic context across multiple sessions while maintaining strict compliance with global data privacy regulations.
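As a rough illustration of anonymizing at the edge, the sketch below redacts two common PII types and swaps the real user ID for a keyed pseudonym before anything is sent to a model. It is a minimal example under stated assumptions, not the production implementation: the names `PSEUDONYM_KEY`, `pseudonymize_user`, `redact_pii`, and `prepare_for_model` are hypothetical, and the two regular expressions are deliberately simple stand-ins for a real PII detection service.

```python
import hashlib
import hmac
import re

# Hypothetical secret for illustration; a real deployment would load this
# from a secrets manager rather than hard-coding it.
PSEUDONYM_KEY = b"demo-key-not-for-production"

# Deliberately simple patterns (assumption): real PII detection needs far
# broader coverage than e-mail addresses and phone numbers.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def pseudonymize_user(user_id: str) -> str:
    """Derive a stable pseudonym from the real ID with HMAC-SHA256."""
    digest = hmac.new(PSEUDONYM_KEY, user_id.encode("utf-8"), hashlib.sha256)
    return "u_" + digest.hexdigest()[:16]


def redact_pii(text: str) -> str:
    """Replace e-mail addresses and phone numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def prepare_for_model(user_id: str, message: str) -> dict:
    """Build the only payload that is allowed to leave the edge."""
    return {"user": pseudonymize_user(user_id), "message": redact_pii(message)}
```

Because the pseudonym is stable for a given user, it can serve as the key for long-term memory lookups without the raw identifier ever reaching the model or the vector store.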

2. Dynamic Agentic Orchestration & Semantic Routing

Rather than forcing a single, monolithic LLM to process every request, we deploy a distributed network of specialized micro-agents. A front-line cognitive router analyzes incoming messages for semantic intent. It then dynamically orchestrates the workflow, routing the user to the precise downstream agent best suited for the task. Because each agent receives only the context relevant to its task, this reduces response latency, limits context window degradation, and lowers overall compute costs.
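The routing step can be sketched as a small dispatcher. This is a simplified illustration, assuming keyword overlap as a stand-in for the semantic intent model; the intent names, keyword sets, and agent functions below are all hypothetical and exist only to show the shape of the pattern.

```python
import re
from typing import Callable, Dict, Set

Agent = Callable[[str], str]


# Hypothetical downstream agents; each would wrap its own model and tools.
def listings_agent(message: str) -> str:
    return "listings-agent handled: " + message


def viewing_agent(message: str) -> str:
    return "viewing-agent handled: " + message


def general_agent(message: str) -> str:
    return "general-agent handled: " + message


AGENTS: Dict[str, Agent] = {
    "listings": listings_agent,
    "viewing": viewing_agent,
    "general": general_agent,
}

# Assumption: keyword overlap stands in for an embedding-based classifier.
INTENT_KEYWORDS: Dict[str, Set[str]] = {
    "listings": {"apartment", "villa", "listing", "bedroom"},
    "viewing": {"viewing", "visit", "tour", "appointment"},
}


def classify_intent(message: str) -> str:
    """Return the intent with the most keyword hits, or 'general' if none."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    scores = {intent: len(words & kws) for intent, kws in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "general"


def route(message: str) -> str:
    """Send the message to the agent registered for its intent."""
    return AGENTS[classify_intent(message)](message)
```

Swapping `classify_intent` for an embedding or LLM-based classifier would leave `route` and the agent registry unchanged, which is the main reason to keep the two concerns separate.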

3. Deterministic Guardrails & Agentic Supervision

To eliminate the risk of hallucinations and protect enterprise brand reputation, the system cannot rely on standard prompting alone. We implement active, deterministic guardrails governed by autonomous supervisory agents. Our "Sentinel" models act as a real-time compliance firewall, executing self-reflective peer reviews and automated correction loops to ensure every output strictly adheres to legal and operational guidelines before the customer ever sees it.
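A correction loop of this kind can be sketched in a few lines. The example below is illustrative only: the rule list, the `supervised_reply` function, and the fallback message are assumptions, and a real Sentinel would combine rules like these with model-based review. The point it demonstrates is that the final check is deterministic: a draft that matches a banned pattern is never returned.

```python
import re
from typing import Callable, List

# Hypothetical compliance rules: no guarantees, no promised percentage returns.
BANNED_PATTERNS = [
    re.compile(r"\bguarantee[sd]?\b", re.IGNORECASE),
    re.compile(r"\b\d+(\.\d+)?\s*%\s*(roi|return|yield)\b", re.IGNORECASE),
]

SAFE_FALLBACK = "I cannot advise on returns. A licensed advisor will follow up with you."


def find_violations(draft: str) -> List[str]:
    """Return the pattern of every rule the draft breaks."""
    return [p.pattern for p in BANNED_PATTERNS if p.search(draft)]


def supervised_reply(
    generate: Callable[[str, List[str]], str],
    prompt: str,
    max_attempts: int = 3,
) -> str:
    """Regenerate until the draft passes every rule, else use the fallback.

    `generate` receives the prompt plus the violations found in the
    previous draft, so it can correct itself on the next attempt.
    """
    feedback: List[str] = []
    for _ in range(max_attempts):
        draft = generate(prompt, feedback)
        feedback = find_violations(draft)
        if not feedback:
            return draft
    return SAFE_FALLBACK
```

Bounding the loop with `max_attempts` and ending on a fixed fallback keeps the worst case predictable: the customer either sees a compliant reply or a safe handoff message.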

4. Full-Stack LLMOps & Dual-Layer Observability

You cannot scale what you cannot measure. Moving beyond basic conversational analytics, we integrate comprehensive AI telemetry into the core of the engine. This dual-layer observability stack serves two masters: it provides engineering teams with deep architectural tracing to monitor system latency and API reliability, while simultaneously feeding live business intelligence dashboards. This gives the C-Suite total visibility into real-time unit economics, deterministic compliance rates, and exact ROI per interaction.
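To make the two layers concrete, here is a minimal sketch in which one record per interaction feeds both an engineering view and a business view. Everything in it is an assumption for illustration: the `InteractionRecord` fields, the `Telemetry` class, and especially the token prices, which vary by model and provider.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List

# Assumed prices in USD per 1,000 tokens; real prices depend on the provider.
PRICE_PER_1K = {"input": 0.0005, "output": 0.0015}


@dataclass
class InteractionRecord:
    user: str            # anonymized user ID, never the raw identifier
    agent: str
    latency_ms: float
    input_tokens: int
    output_tokens: int
    compliant: bool      # did the reply pass the compliance check?

    @property
    def cost_usd(self) -> float:
        return (
            self.input_tokens * PRICE_PER_1K["input"]
            + self.output_tokens * PRICE_PER_1K["output"]
        ) / 1000


class Telemetry:
    def __init__(self) -> None:
        self.records: List[InteractionRecord] = []

    def record(self, rec: InteractionRecord) -> None:
        self.records.append(rec)

    def engineering_view(self) -> Dict[str, float]:
        """Mean latency in milliseconds per agent."""
        by_agent = defaultdict(list)
        for r in self.records:
            by_agent[r.agent].append(r.latency_ms)
        return {agent: sum(v) / len(v) for agent, v in by_agent.items()}

    def business_view(self) -> dict:
        """Token spend per anonymized user and the overall compliance rate."""
        spend = defaultdict(float)
        for r in self.records:
            spend[r.user] += r.cost_usd
        passed = sum(r.compliant for r in self.records)
        rate = passed / len(self.records) if self.records else 0.0
        return {"spend_usd_by_user": dict(spend), "compliance_rate": rate}
```

Both views are derived from the same records, so the numbers on the business dashboard can always be traced back to the individual interactions that engineering monitors.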

The Business Impact

Shifting from a single-prompt chatbot to a multi-agent operational engine produces results that are deterministic and highly measurable:

- 100% Brand Safety: Every outbound message passes through the Sentinel correction loops before delivery, which reduces marketing and legal liabilities to near-zero.

- Granular Financial Control: FinOps teams can trace exact LLM token spend back to a specific, anonymized user ID.

- Seamless Scalability: Because the system degrades gracefully (catching router exceptions without dropping the user), it can handle thousands of concurrent complex requests across multiple global markets.
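The graceful degradation mentioned in the last point can be sketched as a thin wrapper around the router. This is a hedged illustration rather than the actual implementation: `RouterError`, `handle_message`, and the fallback agent are hypothetical names, and a production system would likely add retries and alerting.

```python
import logging
from typing import Callable

logger = logging.getLogger("soar.router")

Agent = Callable[[str], str]


class RouterError(Exception):
    """Hypothetical error raised when the router cannot pick an agent."""


def handle_message(
    message: str,
    router: Callable[[str], Agent],
    fallback_agent: Agent,
) -> str:
    """Route the message, falling back to a default agent on router failure."""
    try:
        agent = router(message)
    except RouterError as exc:
        # Log for the engineering dashboards, but keep the conversation alive.
        logger.warning("router failed, using fallback agent: %s", exc)
        agent = fallback_agent
    return agent(message)
```

The user still receives a reply when routing fails, while the logged warning keeps the failure visible to the engineering team.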

Stop Experimenting. Start Operating.

The technology to automate complex, high-risk customer operations is already here. The difference between a failed AI experiment and a transformative operational engine is the architecture.

If your enterprise is ready to move beyond basic chatbots and deploy secure, highly observable multi-agent systems that protect your brand and integrate directly with your existing data, it’s time to architect a real solution.

👉 Contact us to schedule a review of your customer service operations: contact@qubitlyventures.com